Automated security code reviews with Claude Code and GitHub Actions: How we did it at Deriv

Inside Deriv’s workflow for AI-powered pull request security reviews, interactive PR fixes, and safer software delivery at scale

TL;DR: We built an automated security reviewer using Claude Code GitHub Actions, plugged directly into our CI/CD pipeline. Every pull request now gets a structured security scan covering vulnerabilities, logic bugs, and code quality issues, posted as a PR comment before any human reviewer sees it. Developers can also interact with Claude directly in the thread: ask questions, request fixes, or have it generate a secure fix PR. It is rolled out across four GitHub organisations and is already catching things humans miss.

The problem: Security reviews don’t scale

If you’ve ever worked in a security team, you know the bottleneck. Every PR needs a review. Every review takes time. And the more repositories you manage, the more things slip through the cracks.

At Deriv, we manage 700+ repositories across 5 GitHub organisations. That adds up to more than 100 pull requests per week. Manual security reviews were slowing us down, and worse, inconsistency crept in. Some PRs got deep reviews. Others got a quick glance and a rubber stamp.

We needed something that could review every single PR with the same level of scrutiny, flag real issues, and do it without adding friction to the developer workflow.

So we built it.

The solution: Claude Code as your security reviewer

Claude Code is Anthropic’s command-line tool for agentic coding. But what most people don’t know is that it also ships as a GitHub Action, meaning you can plug it directly into your CI/CD pipeline.

The Claude Code GitHub Action automatically scans every pull request and posts a structured security review (severity rating, CWE classification, vulnerable code snippet, and a ready-to-use fix) directly as a PR comment, before any human reviewer sees it.

We configured two workflows:

Automated security review: Claude scans every PR when it is opened, updated, marked ready for review, or reopened, and leaves a detailed security review as a comment.

Interactive AI assistant: Developers can mention @claude in any PR comment to ask questions, request fixes, or even have Claude create a fix PR.

The result? Every PR to main or master now gets an AI-powered security review automatically. No extra steps for the developer. No bottleneck from the security team.

How automated security code reviews work in GitHub Actions

Phase 1: Automated pull request security scans with Claude Code

When a developer opens a pull request, our claude-code-review.yml workflow triggers automatically. Claude Code reads the diff, analyses the changes, and posts a comprehensive security review directly on the PR.

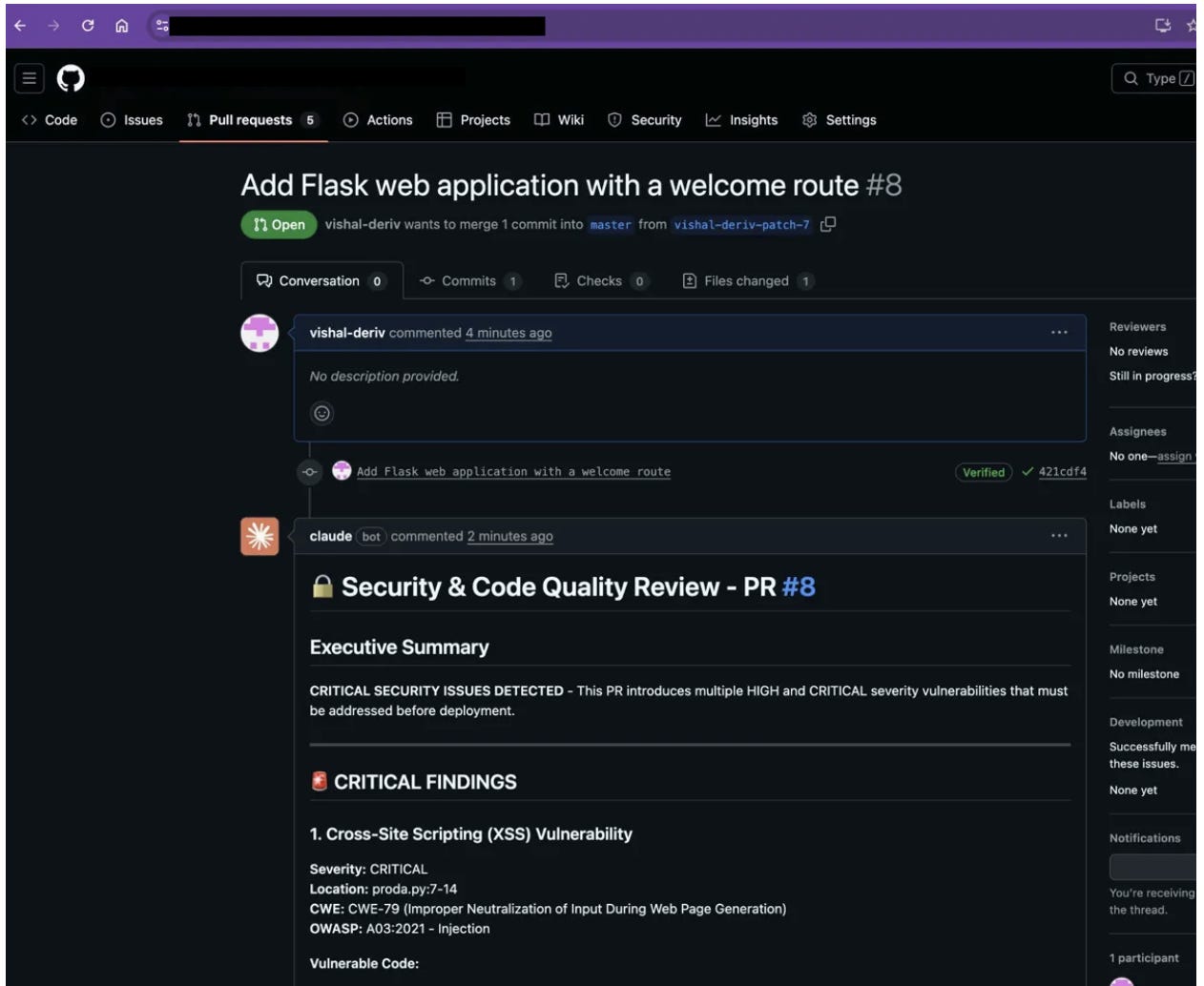

Here’s what a real review looks like:

The review is structured and actionable. For each finding, Claude provides:

Severity: CRITICAL, HIGH, MEDIUM, or LOW

Exact location: file and line number

Vulnerable code snippet: so the developer sees exactly what’s wrong

Exploit scenario: how an attacker could abuse it

Fixed code snippet: a ready-to-use remediation

CWE/OWASP reference: for compliance and tracking

This isn’t a generic ‘be careful with user input’ warning. It’s a specific, contextual, exploit-aware analysis.
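To make the shape of a finding concrete, here is a minimal Python sketch of the six fields listed above. The `Finding` class, its field names, and the comment layout are illustrative assumptions, not part of Claude Code or of our workflow; the real review is free-form text that Claude writes according to the prompt.

```python
from dataclasses import dataclass

# Severity levels from the review format, most severe first.
SEVERITIES = ("CRITICAL", "HIGH", "MEDIUM", "LOW")


@dataclass
class Finding:
    """Hypothetical container for one review finding (illustration only)."""
    severity: str
    file: str
    line: int
    vulnerable_code: str
    exploit_scenario: str
    fixed_code: str
    reference: str  # CWE/OWASP identifier, e.g. "CWE-79"

    def __post_init__(self) -> None:
        if self.severity not in SEVERITIES:
            raise ValueError(f"unknown severity: {self.severity}")

    def to_comment(self) -> str:
        """Render the finding as plain text suitable for a PR comment."""
        return "\n".join([
            f"[{self.severity}] {self.file}:{self.line} ({self.reference})",
            f"Vulnerable code: {self.vulnerable_code}",
            f"Exploit scenario: {self.exploit_scenario}",
            f"Suggested fix: {self.fixed_code}",
        ])


def sort_by_severity(findings: list[Finding]) -> list[Finding]:
    """Order findings so the most severe ones come first."""
    return sorted(findings, key=lambda f: SEVERITIES.index(f.severity))
```

Keeping severity as a closed set makes it easy to sort or filter findings, for example to surface CRITICAL and HIGH items first.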

Phase 2: Interactive PR security fixes with Claude Code

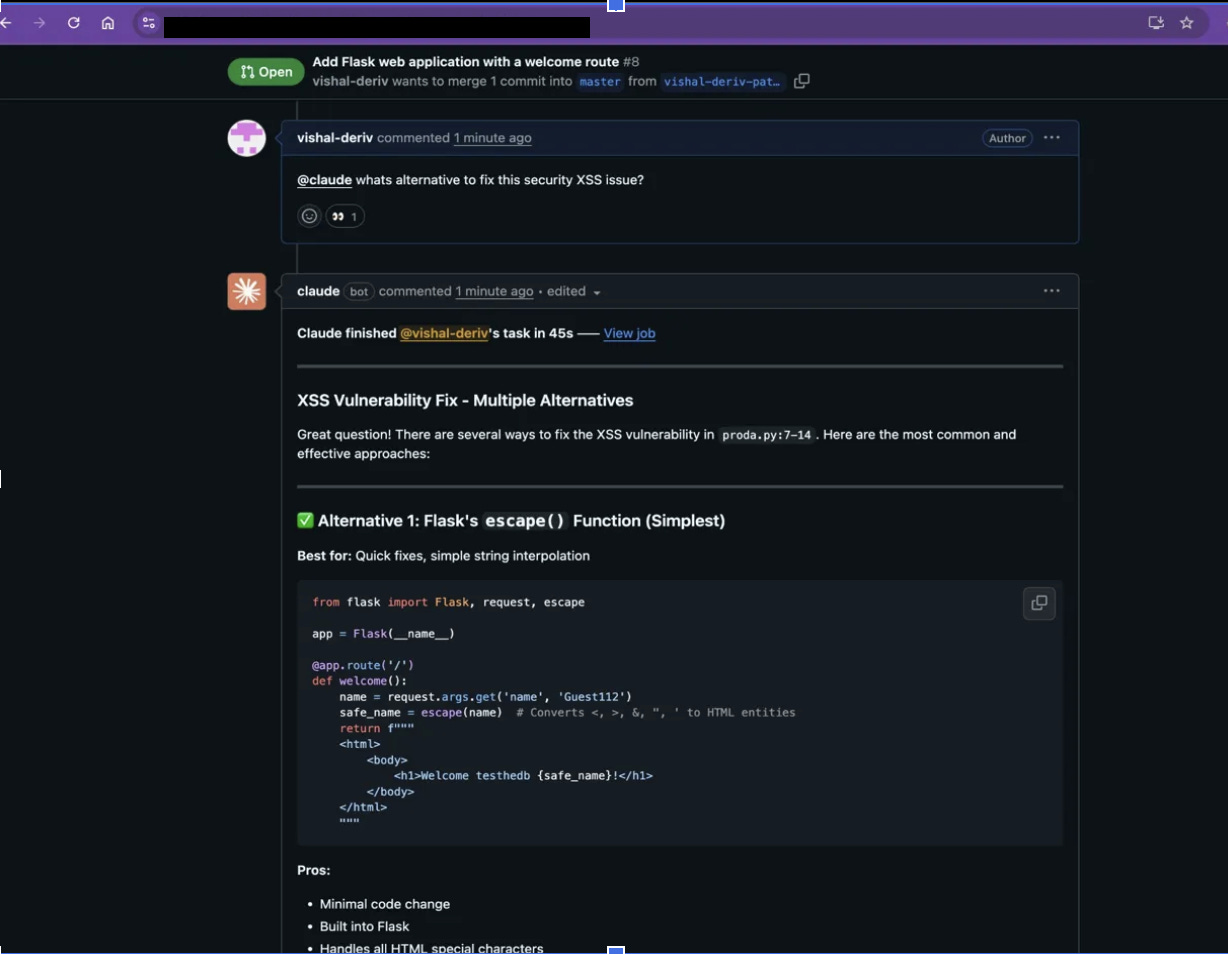

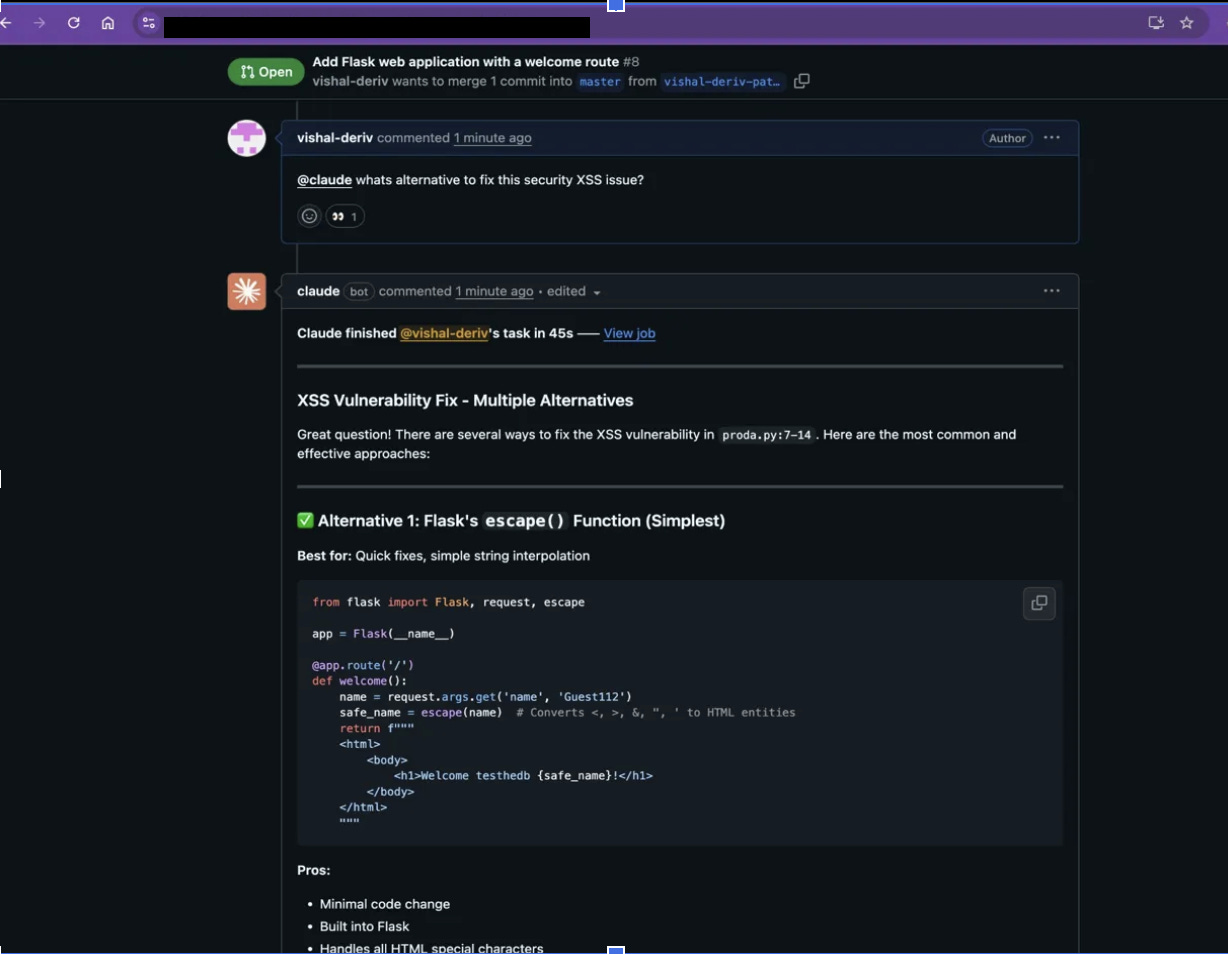

Here’s where it gets interesting. Developers don’t just passively receive the review; they can have a conversation with Claude right in the PR thread.

Want to know what alternatives exist for fixing an XSS issue? Just ask. Claude doesn’t give one answer. It presents multiple remediation strategies, from a quick escape() fix to Jinja2 templates, input validation, and CSP headers, each with a clear comparison on ease of use, security strength, and scalability.
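The quickest of those options, output escaping, can be sketched with Python’s standard library alone. The function names and the example CSP value below are assumptions made for illustration; they are not taken from the reviewed code or from Claude’s actual answer.

```python
from html import escape


def render_greeting_unsafe(name: str) -> str:
    # Vulnerable pattern: user input is interpolated into HTML unchanged.
    return f"<h1>Hello, {name}!</h1>"


def render_greeting_escaped(name: str) -> str:
    # Quick fix: escape HTML metacharacters before interpolation.
    return f"<h1>Hello, {escape(name)}!</h1>"


# Defence in depth: an example Content-Security-Policy header (assumed value)
# that stops inline scripts from running even if an escape is missed.
CSP_HEADER = ("Content-Security-Policy", "default-src 'self'; script-src 'self'")

payload = "<script>alert(1)</script>"
print(render_greeting_unsafe(payload))   # script tag reaches the browser intact
print(render_greeting_escaped(payload))  # angle brackets become &lt; and &gt;
```

Escaping is the smallest change. Template engines with autoescaping, such as Jinja2, apply the same idea by default, so individual call sites cannot forget it.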

Phase 3: How Claude Code generates secure fix PRs

This is the part that surprised even us. When a developer asked Claude to create a PR with the security fixes, it actually did it.

Notice the key detail: Claude doesn’t just push code. It prepares everything and then asks for approval before executing git operations. The developer stays in control.

The fix itself was solid: replacing raw f-string HTML rendering with render_template_string, switching debug mode off, and binding to 127.0.0.1 instead of 0.0.0.0. Real security improvements, not cosmetic changes.

GitHub Actions workflow files for automated security reviews

Setting this up is straightforward. You need two workflow files in your .github/workflows/ directory. Full code is available at github.com/deriv-security/claude

1. Automated review: claude-code-review.yml

This workflow triggers whenever a PR is opened, updated, marked ready for review, or reopened, and runs a comprehensive security scan:

name: Claude Code Review

on:
  pull_request:
    types: [opened, synchronize, ready_for_review, reopened]

jobs:
  claude-review:
    runs-on: ubuntu-latest
    permissions:
      contents: read
      pull-requests: read
      issues: read
      id-token: write
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4
        with:
          fetch-depth: 1

      - name: Run Claude Code Review
        id: claude-review
        uses: anthropics/claude-code-action@v1
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          prompt: |
            REPO: ${{ github.repository }}
            PR NUMBER: ${{ github.event.pull_request.number }}

            You are conducting a comprehensive security and code quality
            review. Analyze with extreme scrutiny.

            ## Your Mission
            Find EVERY security vulnerability, logic error, performance
            issue, and code quality problem.

            ## Priority Order
            1. Security vulnerabilities (RCE, injection, auth bypass)
            2. Logic bugs that cause incorrect behavior
            3. Performance issues causing slowness/crashes
            4. Code quality reducing maintainability

            ## For Each Finding Provide:
            - Severity (CRITICAL/HIGH/MEDIUM/LOW)
            - Location (file:line)
            - Vulnerable code snippet
            - Exploit scenario
            - Fixed code snippet
            - CWE/OWASP reference

            ## Focus Areas:
            ✓ Input validation and sanitization
            ✓ Authentication and authorization
            ✓ Injection vulnerabilities (SQL, Command, XSS)
            ✓ Cryptographic security
            ✓ Sensitive data exposure
            ✓ Error handling and logging
            ✓ Race conditions and concurrency
            ✓ Resource management (memory, connections)
            ✓ API security (rate limiting, CORS)
            ✓ Dependency vulnerabilities

            Be direct. Flag everything suspicious. Provide actionable fixes.

            Use the repository's CLAUDE.md for guidance on style and
            conventions.

            Use `gh pr comment` with your Bash tool to leave your review
            as a comment on the PR.
          claude_args: >-
            --allowed-tools
            "Bash(gh issue view:*),Bash(gh search:*),
            Bash(gh issue list:*),Bash(gh pr comment:*),
            Bash(gh pr diff:*),Bash(gh pr view:*),
            Bash(gh pr list:*)"

2. Interactive assistant: claude.yml

This workflow enables the @claude mention feature for interactive conversations:

name: Claude Code

on:
  issue_comment:
    types: [created]
  pull_request_review_comment:
    types: [created]
  issues:
    types: [opened, assigned]
  pull_request_review:
    types: [submitted]

jobs:
  claude:
    if: |
      (github.event_name == 'issue_comment' &&
        contains(github.event.comment.body, '@claude')) ||
      (github.event_name == 'pull_request_review_comment' &&
        contains(github.event.comment.body, '@claude')) ||
      (github.event_name == 'pull_request_review' &&
        contains(github.event.review.body, '@claude')) ||
      (github.event_name == 'issues' &&
        (contains(github.event.issue.body, '@claude') ||
         contains(github.event.issue.title, '@claude')))
    runs-on: ubuntu-latest
    permissions:
      contents: read
      pull-requests: read
      issues: read
      id-token: write
      actions: read
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4
        with:
          fetch-depth: 1

      - name: Run Claude Code
        id: claude
        uses: anthropics/claude-code-action@v1
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          additional_permissions: |
            actions: read

That’s it. Two workflow files in your .github/workflows/ directory and one API key are enough to give every pull request in your organisation an automated security review.
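The `if:` condition in claude.yml is the part people most often misread, so here is the same logic written as a small Python predicate. This is a sketch for reasoning about which events start the job, not code that runs in the workflow; `should_run` and its arguments are hypothetical names, and `event` stands for the webhook payload that GitHub exposes as `github.event`.

```python
MENTION = "@claude"


def _has_mention(text) -> bool:
    # GitHub's contains() expression ignores case for strings, so the
    # comparison here is lowercased to match. A missing body counts as empty.
    return MENTION in (text or "").lower()


def should_run(event_name: str, event: dict) -> bool:
    """Return True when the claude.yml job condition would be satisfied."""
    if event_name in ("issue_comment", "pull_request_review_comment"):
        return _has_mention((event.get("comment") or {}).get("body"))
    if event_name == "pull_request_review":
        return _has_mention((event.get("review") or {}).get("body"))
    if event_name == "issues":
        issue = event.get("issue") or {}
        return _has_mention(issue.get("body")) or _has_mention(issue.get("title"))
    # Any other event never starts the job.
    return False


print(should_run("issue_comment", {"comment": {"body": "@claude can you fix this?"}}))
print(should_run("issues", {"issue": {"title": "Bug report", "body": "No mention here"}}))
```

Reading the condition this way shows that the job stays idle unless a comment, review, or issue mentions @claude, so ordinary PR discussion does not start a run.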

What vulnerabilities and code issues Claude Code catches

In our first week of deployment, Claude flagged issues across multiple categories:

Security vulnerabilities: XSS through unsanitised user input, debug mode left enabled in production, applications binding to 0.0.0.0 instead of localhost, missing input validation, and hardcoded secrets.

Code quality issues: Missing error handling, inefficient database queries, unused imports, and inconsistent naming conventions.

Logic bugs: Race conditions in async operations, off-by-one errors in pagination, and incorrect error propagation.

The XSS finding above is a real example. A simple Flask app was rendering user input directly into HTML with an f-string, a textbook reflected XSS vulnerability. Claude caught it, classified it correctly (CWE-79, OWASP A03:2021), and provided multiple remediation paths.

Claude Code detects injection vulnerabilities, hardcoded secrets, debug mode left enabled in production, missing input validation, race conditions, and logic bugs with exact file and line locations, exploit scenarios, and remediation code for each finding.
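Of the logic bugs listed above, the pagination off-by-one is the easiest to show in a few lines. The functions below are a made-up illustration of that bug class and its fix, not code from a Deriv repository or from an actual review.

```python
def total_pages_buggy(total_items: int, per_page: int) -> int:
    # Bug: floor division silently drops the final, partially filled page.
    return total_items // per_page


def total_pages(total_items: int, per_page: int) -> int:
    """Number of pages needed to show every item (ceiling division)."""
    if per_page <= 0:
        raise ValueError("per_page must be positive")
    return (total_items + per_page - 1) // per_page


def get_page(items: list, page: int, per_page: int) -> list:
    """Return the items on a 1-indexed page."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    start = (page - 1) * per_page  # page 1 starts at index 0
    return items[start:start + per_page]


items = list(range(25))
print(total_pages_buggy(len(items), 10))  # 2: the last five items are unreachable
print(total_pages(len(items), 10))        # 3
print(get_page(items, 3, 10))             # [20, 21, 22, 23, 24]
```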

How repo admins can manage PR blocks and false positives

By default, any confirmed security finding will block the PR from merging. This is intentional.

If a bypass is required — say, for a confirmed false positive — add a clear comment explaining the reason. This feedback loop matters: it helps the system avoid reporting the same false positive in the future.

Please don’t bypass PR blocks without an explanation. The system gets better with your input.

Lessons from deploying AI-powered security code reviews at Deriv

1. AI reviews complement, not replace, human reviews. Claude catches the pattern-matching stuff, such as known vulnerability classes, common misconfigurations, and OWASP Top 10 issues, and applies the same scrutiny to every PR. But it doesn’t replace the human reviewer who understands business logic, threat models, and organisational context. Use both.

2. Interactive is better than static. The automated scan is valuable, but the real value is the interactive @claude feature. Developers can ask follow-up questions, explore alternative fixes, and even have Claude generate the remediation PR. This turns a security finding into a learning moment.

3. The prompt matters. The security review prompt we use is specific and structured. We tell Claude exactly what to look for, how to prioritise findings, and what format to use. A vague prompt gives vague results.

4. False positives require active management. The first few weeks involved some noise: findings that were technically correct but contextually fine for our codebase. Each bypass comment we added taught the system our risk tolerance, and the false-positive rate has dropped noticeably as we’ve built up that history. Budget time for this calibration phase; it’s worth it.

5. Start with security, expand from there. We started with security-focused reviews because the cost of missing a vulnerability is high. But the same infrastructure works for code quality, performance, accessibility, or any other review concern. The prompt is the only thing that changes.

The goal isn’t to replace security engineers. It’s to give every developer access to a security-aware reviewer on every single PR. Claude Code handles the pattern-matching (known vulnerability classes, OWASP Top 10 issues, common misconfigurations), so that when the human reviewer sits down, the obvious issues are already caught and the conversation can focus on the hard stuff: business logic and threat modelling.

Security at scale isn’t about hiring more reviewers. It’s about making every review count.

For more details, see the official Claude Code Action documentation.

Vishal Panchani is a Product Security Tech Lead at Deriv.

Follow our official LinkedIn page for company updates and upcoming events.

Explore Deriv careers.